Extreme Ensemble under Uncertainty

The development of robust design strategies coupled with detailed simulation models requires the introduction of advanced algorithms and computing resource management tools.

On the algorithmic side, we explore the use of simplex-based stochastic collocation methods to characterize uncertainties, and multi-objective genetic algorithms to optimize a large-scale, three-dimensional geometry using a very large number (a so-called "extreme ensemble") of CFD simulations on HPC clusters. In this article, we concern ourselves with the optimization under uncertainty of a Formula 1 tire brake intake to maximize cooling eciency and to minimize aerodynamic resistance.

The uncertainties are introduced in terms of tire deformation and free stream conditions. The simulations environment Leland has been developed to dynamically schedule, monitor and stir the calculation ensemble and to extract runtime information as well as simulation results and statistics. Leland is equipped with an auto-tuning strategy for optimal load balancing and fault tolerance checks to avoid failures in the ensemble.

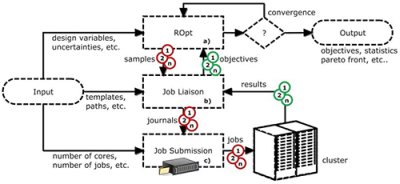

Fig. 1 - Leland flowchart

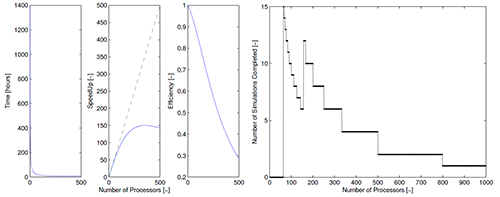

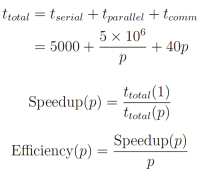

(a) Total time required to complete simulation as a function of the number

of processors (left), Speed-up curve (middle), and Eciency curve (right)

(b) Number of simulations that would be completed in a 24 hour window

with 1000 available processors using exactly p processors for each simulation

Introduction

In the last few years, clusters with 10,000 CPUs have become available, and it is now feasible to design and optimize complex engineering systems using computationally intensive simulations. This development highlights the need to create resource managers that deliver cost-effective utilization with fault tolerance. The BlueGene/L cluster with 65,536 nodes was designed to have less than one failure every ten days. In fact, this cluster and others like it, experience an average of one processor failure every hour. In light of this, it is necessary to study, develop, and to continually improve strategies for the ecient completion of large simulations. Theoretical work has been published in the literature that suggests that advanced algorithms might be available although they have only been demonstrated using test functions on a small number of compute nodes. The design process involves running an extreme number of large computations or "extreme ensemble" (in the range of thousands), in order to create a robust solution that will remain optimal under conditions that cannot be controlled ("uncertainties"). We call this process optimization under uncertainty. The ensemble is a list of runs generated by the optimization and uncertainty analysis algorithms that is dynamic in nature and not deterministic. This means that the number of additional simulations is dependent on the results of the prior converged simulations.

In this article, we explore the computational design of a Formula 1 tire and brake assembly using large-scale, threedimensional Reynolds-Averaged Navier-Stokes simulations on a high performance computing cluster. The purpose of designing the brake duct is to increase the amount of air captured by the duct while minimizing the total drag of the tire. This multi-objective optimization problem is tacked using a genetic algorithm which produces a Pareto front of best solutions. An uncertainty analysis of 4 specific points on the Pareto front (minimum drag, maximum cooling, best operating point or trade-off, and baseline F1 tire geometry) is summarized in the results section of this paper. Some of our future work will include a study on how uncertainties can be invasively incorporated in the optimization procedure, producing a probabilistic Pareto front rather than analyzing the sensitivity of the deterministic Pareto due to uncertainties. For such a study, approximately 400 simulations have to be performed per optimization cycle (i.e. generation). When the results of these 400 simulations are analyzed, an additional list of 400 simulations, each with a unique range of input parameters, will be created for the next generation of the optimization process. The values of these input parameters are not known a priori.

The optimization procedure needs to account for uncertainties arising from variables in ow conditions as well as from variability in the flexible tire geometry. This complex baseline geometry consists of 30 million mesh cells. In order to generate an optimal design under uncertainty, the mesh is deformed locally, creating 5000 unique simulations to perform. Each simulation (or realization) will be run on our in-house cluster using 2400 cores; the full design process should take approximately 2 weeks to complete.

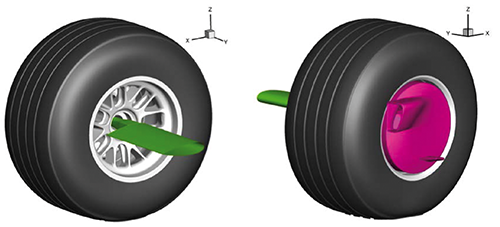

Fig. 3 - Front right tire of the Formula 1 race car used in this study showing green airfoil strut used to secure tire to the experimental wind tunnel facility and the outer brake duct (magenta) used to cool the brake assembly

The second part of this article is dedicated to the development of a software platform able to reduce the total time needed to carry out an engineering design process such as the one described above. Leland, the simulations environment we have developed, allows us to schedule the resources, to monitor the calculation ensemble and to extract runtime information as well as simulation results and statistics on the y. Leland is equipped with an autotuning strategy for selecting an optimal processor count. Moreover, an implemented fault tolerance strategy ensures that a simulation or a processor stall is detected and does not impact the overall ensemble finish time. The results of this study document the actual computational time savings achieved through the efficient use of resources with Leland, as opposed to submitting individual jobs on the cluster, one at a time, using traditional queue managers (e.g. Torque, SLURM, etc.).

Robust Design Algorithm

The impact of uncertainties in the robust design process is characterized using the Simplex Stochastic Collocation (SSC) algorithm, which combines the effectiveness of random sampling in higher dimensions (multiple uncertainties) with the accuracy of polynomial interpolation. This approach is characterized by a superlinear convergence behavior which outperforms classical Monte Carlo sampling while retaining its robustness. In the SSC methods, a discretization of the space spanned by the uncertain parameters is employed, and the simplex elements obtained from a Delaunay triangulation of sampling points are constructed. The robustness of the approximation is guaranteed by using a limiter approach for the local polynomial degree based on the extension of the Local Extremum Diminishing (LED) concept to probability space. The discretization is adaptively refined by calculating a refinement measure based on a local error estimate in each of the simplex elements. A new sampling point is then added randomly in the simplex with the highest measure and the Delaunay triangulation is updated. The implementation of advanced algorithms to improve the scalability of Delaunay triangulation in higher dimensions, in order to circumvent the curse of dimensionality, has not been fully investigated as part of this study. There are proofs in literature that show that Delaunay triangulation can achieve linear scaling with higher dimensions.

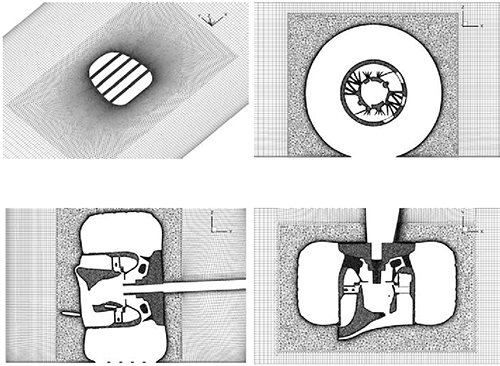

Fig. 4 - Four different views showing the Formula 1 tire mesh

(a) Isometric view of ground plane showing contact patch

(b) Streamwise cut plane showing mesh inside rotor passages

(c) Spanwise cut plane showing full brake assembly

(d) Top view of plane cutting through the center of the tire

In this work, we analyze a nontrivial multi-objective problem within which it is not possible to find a unique solution that simultaneously optimizes each objective: when attempting to improve an objective further, other objectives suffer as a result. A tentative solution is called non-dominated, Pareto optimal, or Pareto ecient if an improvement in one objective requires a degradation of another. We use the NSGA-II algorithm to obtain the nondominated solutions, hence we analyze the more interesting solutions on the deterministic Pareto set in presence of uncertainty. Our goal here is to prove the importance of considering the variability of several input conditions in the design process. For all these solutions, the SSC is used to obtain a reconstruction of the objective function statistical moments, refining the simplexes until an accuracy threshold is reached.

Dynamic Resource Manager - Leland

The structure of Leland is based on a workflow through I/O sub-systems that represent the software applications (i.e. Sculptor, Fluent, Tecplot, Matlab etc.) involved in the process. This environment is designed to run natively on any high-performance computing (HPC) system, by integrating with the job-submission/queuing system (e.g. Torque). Moreover, it does not require continuous management: once the analysis is initiated, multiple simulations are submitted and monitored automatically. In Leland, a job is an instance of the entire multi-physics simulations, which might include grid generation, mesh morphing, flow solution and post-processing. The main objective of Leland is to set-up a candidate design as a job, to manage it until it is completed and to gather relevant results that are used to inform the optimization under the uncertainty process. ROpt (robust optimum), shown in Figure 1a, is the engine behind this design environment.

Fig. 5 - Brake duct optimization variables

(a) Brake duct width

(b) Brake duct height

(c) Brake duct length

Given the design and/or uncertain input variables, the ROpt continuously generates new design proposals (samples) based on the evolutionary strategy and/or analysis of the uncertainty space, until a convergence criterion is met. The Job Liaison shown in Figure 1b, defines the characteristics of each single job and continuously monitors the progress of the simulations until completion, in order to communicate the objective evaluations back to the ROpt. It is the job of this module, to continuously monitor for faults, stalls, or errors, to ensure that the total runtime is not detrimentally affected by processor/memory failure.

The Job Submission engine, shown in Figure 1c, ensures that the correct number of jobs is always running on the cluster. The variables (number of cores, number of jobs, etc.) from the input file that are used to initialize the runs are dynamic, which means that they can be edited on the y and the system will respond accordingly. Leland has the ability to dynamically select the optimal number of processors to run per realization. This is achieved by auto-tuning. The user selects an optimal window of cores to use per realization prior to launching the full ensemble. The auto-tuning algorithm then samples the space by using a unique number of cores per realization in the ensemble. Once two or more realizations are complete, the autotuning algorithm can start to construct an application-specific speed-up curve (Figure 2). Speed-up is defined as the total time required to finish the simulation using 1 processor divided by the total time required to finish the simulation using p processors (see Equation 2).

The speed-up curve in Figure 2 was generated by artificially replicating an HPC simulation. The time required to complete an HPC simulation is primarily a function of three factors: i) portion of the code that is not parallelizable (tserial in Equation 1), ii) portion of the code that is parallelizable (t parallel in Equation 1) and iii) the communication time between CPUs (tcomm in Equation 1). The serial portion of the code in the example illustrated in Figure 2 is constant (5000 seconds) and not a function of the number of processors allocated to the job. The length of time required to complete the parallel portion of the code in the example shown in the same figure, is 5 million seconds divided by the number of processors used. Finally, there will always be some latency between CPUs and this is characterized by the communication time between nodes. The linear penalization we used in this example is 40 seconds per processor, but the latency slowdown could also be a more complex function related to the specific application.

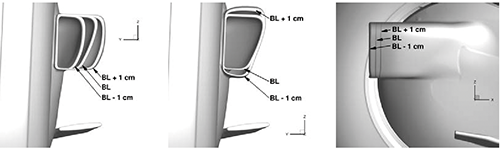

Fig. 6 - Subset of uncertain variables tested for sensitivity in output quantities of interest

(a) Brake duct width

(b) Brake duct height

(c) Brake duct length

Linear speed-up, also referred to as ideal speed-up, is shown as the green dotted line in the middle plot of Figure 2. An algorithm has linear speed-up if the time required to finish the simulation halves when the number of processors is doubled. It is common for fluid dynamic simulations to experience speed-down; this occurs when the total time required to finish the simulation actually rises with an increasing number of processors. Leland has the ability to recognize the point at which speed-down occurs (at about 400 processors in Figure 2) and never uses more than this number of processors. The rightmost plot in Figure 2 shows the eciency (defined by Equation 3) curve for this artificial HPC simulation. The eciency typically ranges between values of 0 1 estimating how well utilized the processors are compared to the effort wasted in synchronization and communication. It is clear from this plot, that the highest eciency occurs with the lowest number of processors.

This speed-up curve will guide Leland's auto-tuning algorithm in assigning the optimal number of cores per realization (which may not be in the user's original window). Since an ensemble of this size takes more than a few weeks on a large cluster, multiple job submissions need to be submitted to the local queuing system. These jobs are typically limited to 24 hour runtimes (or a wall clock time of 24 hours). Thus, it is essential that the auto-tuning algorithm recognizes how many hours remain prior to the job terminating due to the wall clock time, and tries to increase the number of cores to finish as many realizations as possible within a specific time frame.

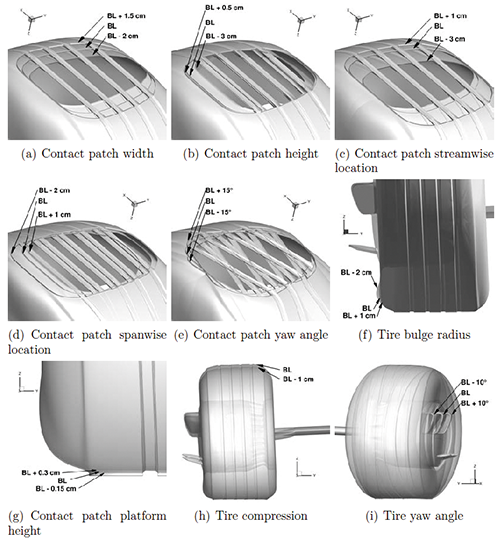

Fig. 7 - Wake sensitivity (shown by streamwise x-velocity contours for

a plane located 1.12 wheel diameters downstream from the center of the tire)

for a simplified tire with wheel fairings (top left), baseline F1 tire (top right),

baseline F1 tire with blocked hub passages (bottom left), and simplified tire

with artificial mass eux from blue segment (bottom right)

Application Description

Leland is used here to optimize the shape of a F1 tire brake duct (magenta color in Figure 3(b)), taking into account the geometrical uncertainties associated with the rotating rubber tire and uncertain inflow conditions. The objectives are to minimize the tire drag [N] while maximizing the captured mass flow (kg/s) needed to cool the brake assembly. A computational mesh consisting of 30 million elements is considered for a fully detailed 3D wheel model (Figure 4). The simulations that require geometrical modification (for optimization for uncertainty) are created using Sculptor, a commercial mesh deforming software from Optimal Solutions. The software is used to generate multiple CFD mesh model variants, while keeping CAD and grid generators out of the design process loop, thus saving design time and costs substantially. The generated models are then used to compute the air flow around the tire geometry by a parallel CFD solver (Fluent v12.1.4). It is important to closely monitor the skewness of elements in Sculptor to ensure grid quality. If the deformation in Sculptor is too large, the CFD solver will diverge. The boundary conditions, computational setup, and experimental comparison for this case are outlined in separate studies.

Optimization Variables

A local mesh morphing software, Sculptor (v2.3.2), was used to deform the baseline Formula 1 brake duct (Figure 3). Specific control volumes were used to deform the brake duct in three dimensions, namely i) width of opening (Figure 5(a)), ii) height of opening (Figure 5(b)) and iii) protrusion length (Figure 5(c)). Each design variable was allowed to change by 1cm as depicted in Figure 5.

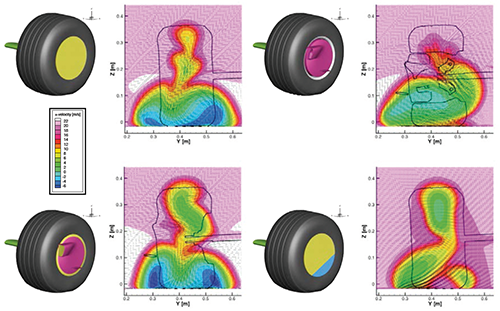

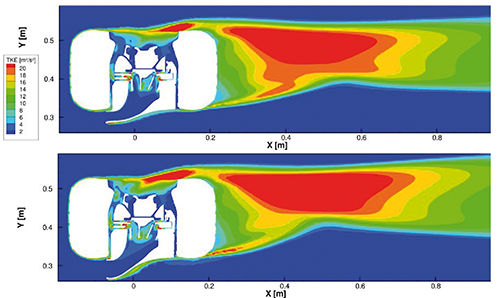

Fig. 8 - Turbulent kinetic energy contours for the minimum drag configuration (top)

and maximum cooling configuration (bottom)

Uncertain Variables

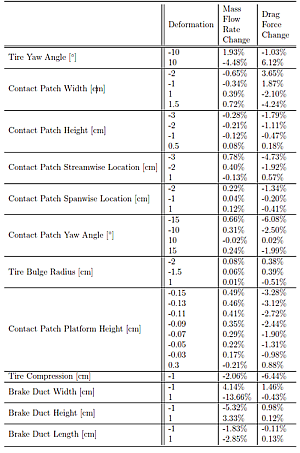

Multiple uncertain variables were tested to determine their sensitivity to output quantities of interest using a DOE (design of experiments) approach. Some of the uncertain variables were based on the in flow conditions (i.e. yaw angle, turbulent intensity, turbulent length scale) while others were based on geometric characteristics of the tire (i.e. contact patch details, tire bulge radius, camber angle). Figure 6 shows 9 geometric modifications which were performed. Each subfigure shows the minimum, baseline F1 tire geometry, and maximum deformation for each uncertain variable.

From the results of a purely one-dimensional perturbation analysis, the turbulence length scale (on the order of 0m 2m) results in less than a 0.1% difference in both, the mass flow rate through the brake duct and the overall drag on the

tire. Conversely, both the mass flow rate and tire drag are very sensitive to the turbulence intensity. The mass flow rate decreased by 7.8% compared to the baseline (less cooling) with 40% turbulence intensity, and the tire drag increased by 7.2% with 40% turbulence intensity. This analysis confirms that the car performance decreases with "dirty" air compared to "clean" air.

The sensitivity of the output quantities of interest caused by the tire yaw angle is reflected in the first row of Table 1. The remaining rows in Table 1, show the sensitivity of mass flow rate and drag force to geometric characteristics, specifically contact patch, tire bulge radius, tire compression, and brake duct dimensions. In the end, the three most sensitive uncertain variables, namely tire contact patch width, tire yaw angle, and turbulence intensity, were selected for the optimization under uncertainty study. The tire contact patch width was able to expand and contract up to 1cm, the tire yaw angle varied between 3, and the turbulence intensity varied between 0% and 5%.

Results

Formula 1 engineers are interested in primarily three factors related to tire aerodynamics: i) overall tire lift and drag ii), cooling performance of the brakes, and iii) how the tire air flow affects downstream components (wake characteristics). All three factors are tightly coupled which makes the design quite complicated, especially when uncertainty in the flexible tire walls and upstream conditions can negatively effect the car performance.

Figure 7 illustrates the wake sensitivity caused by flow traveling through the tire hub and exiting from the outboard side of the tire. If the flow of air is not allowed to pass through the tire hub (see top left and bottom left images in Figure 7), there is no mass eux from the outboard side of the tire and the wake is quite symmetric about the wheel centerline. The wake is dominated by a counterrotating vortex pair and both the inboard (left) and outboard (right) vortex are of similar size. Alternatively, if the flow of air is allowed to pass through the tire hub, the inboard (left) vortex becomes larger than the outboard (right) vortex causing wake asymmetry (see top right and bottom right images in Figure 7).

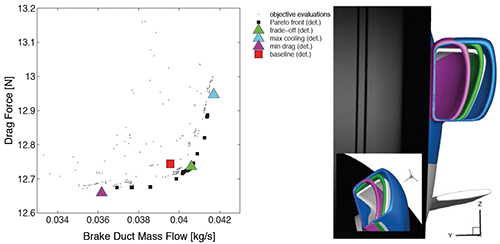

Fig. 9 - Deterministic Pareto front (left); the green, blue, magenta, and gray brake

ducts in the subfjgure on the right correspond to the trade-off, max cooling, minimum drag,

and baseline configurations respectively

The results of the single parameter perturbations indicated previously show that the mass flow rate through the brake duct and tire drag force are more sensitive to the brake duct width than the brake duct height or length (in the range of deformation between 1cm). The physical explanation of this result becomes evident when visualizing iso-contours of turbulent kinetic energy around the tire. Figure 7 presents the difference between a low width configuration (top) and high width configuration (bottom). The larger width of the brake duct causes a larger separation region immediately behind the brake duct in addition to higher turbulence levels in the shear layer immediately behind the inboard back edge of the tire.

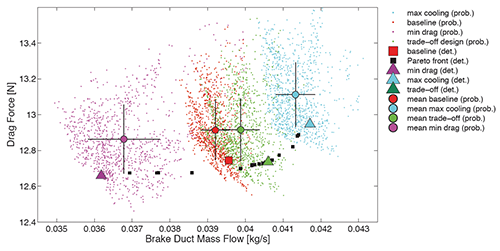

Fig. 10 - Pareto frontier for F1 wheel assembly showing the variability of the

minimum drag (magenta), baseline (red), trade-off (green), and maximum cooling

(blue) designs to uncertainty in the in flow conditions and exible tire geometry.

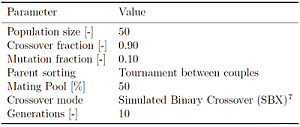

The Pareto frontier showing the optimal brake duct designs under no uncertainty are illustrated in Figure 9. Ten generations, which equates to 450 simulations, were needed to eventually construct the Pareto frontier. Further details about the optimization strategy can be found in Table 2. This table reports the settings of the NSGA-II algorithm adopted to drive the main phases of the genetic algorithm: selection 6 (e.g. mating pool, parent sorting) and reproduction (e.g. crossover and mutation). Leland was used to handle the job scheduling and management and as a result, the time required to complete the 450 simulations was 2 days compared to about 4 days without using Leland, which requires submitting jobs manually to the job queuing system using a constant number of processors. Among the Pareto set (see Figure 9), the design that achieves the highest mass flow rate is shown in blue and the design that achieves the lowest overall drag on the tire is shown in magenta. The green design is labeled as the tradeoff design, since this design tries to achieve the highest mass ow through the inlet of the brake duct while minimizing the total drag on the tire. The baseline geometry, reported in red, was shown not to be on the Pareto front in the deterministic setting.

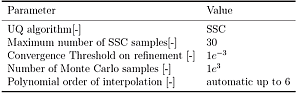

In the previous results, once the tire configuration and other input conditions are specified, the solution is uniquely determined without vagueness. On the other hand, when uncertainties are present, the results have to be expressed in a non-deterministic fashion either probabilistically or as ranges of possible outcomes. The approach we followed here using the SSC is strictly nonintrusive, in the sense that the existing tools are used without modifications, but the solution - or more precisely, their probability distributions - are constructed performing an ensemble of deterministic analyses. Further details about the uncertainty quantification strategy can be found in Table 3.

The variability of the four geometries described above (namely trade-off, highest mass flow, lowest drag, and baseline) as a result of the uncertainties in the tire yaw angle, turbulence intensity, and contact patch width, are presented in Figure 10. The variability of the minimum drag design is the highest, as illustrated by the spread of magenta dots, followed by the maximum mass flow design shown by blue dots, trade-off design presented by green dots and baseline design reflected by red dots. The colored dots in this figure represent the mean probabilistic values and the black lines represent 1 standard deviation of the probabilistic distribution. It is evident in this figure that the optimal designs, on average, move away from the Pareto frontier, decreasing the overall performance of the racing car.

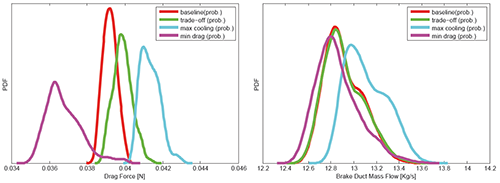

A similar conclusion can be drawn when we look at the probability density of the drag force and the brake mass flow (Figure 11). The former shows a large excursion of both, the position of the peak and the support, while the latter is only marginally affected. This directional sensitivity under uncertainty with respect to drag force might suggest that only the drag minimization could be treated as a probabilistic objective, while the brake mass flow optimization can be handled using conventional (deterministic) optimization. Since the solutions identified above move away from the deterministic Pareto, the optimization process cannot be decoupled from the uncertainty quantification process. We plan to tackle the joint problem in a future study.

Fig. 11 - PDF's of the output quantities of interest used for this study

Conclusions

In this work, we introduced an ecient method to perform massive ensemble calculations with application to a complex Formula 1 tire assembly optimization case. Special attention has been placed on the creation of an effective resource manager to handle the large number of computations that are required. Since the geometrical uncertainties associated with rubber tires and inflow uncertainties associated with upstream "dirty" air, have an impact on the dominating solutions, their presence has to be taken into account in the design process. The next step of this study is to consider the presence of uncertainties invasively in the optimization procedure, generating a probabilistic Pareto front rather than analyzing the sensitivity of the deterministic Pareto due to uncertainties.

Acknowledgments

The authors would like to acknowledge first and foremost Sculptor Optimal Solutions, specifically Taylor Newill and John Jenkins, for their generous support, training, and licenses. The authors wish to thank Dr. J. Witteveen for providing the initial version of the Simplex Stochastic Collocation algorithm and Steve Jones and Michael Emory for helping with the resource allocation manager. The authors also thank Toyota Motor Corporation - F1 Motorsports Division for providing the original geometry used in this study.